“I had with me many tools, and dug much within the walls of the obliterated edifices; but progress was slow, and nothing significant was revealed.”

– H. P. Lovecraft, “The Nameless City”

Gregory Weir, who fashioned the very nice piece The Majesty of Colors, has a new game with levels built out of existing texts, including “The Nameless City.” The new platformer is called Silent Conversation, a title taken from poet Walter Savage Landor’s description of reading.

My impression is that this one is not as short and compelling as The Majesty of Colors, but is more elaborate and is, if not a success, at least a very interesting failure. It’s not a particularly good or fun platformer qua platformer and doesn’t offer a very good model of the reading process, but it reveals more about the potential of games as digital objects that can be both played and read. I don’t think that making levels out of pre-existing texts works nearly as well as original writing would, but the setting of those texts into interactive concrete was certainly done with care.

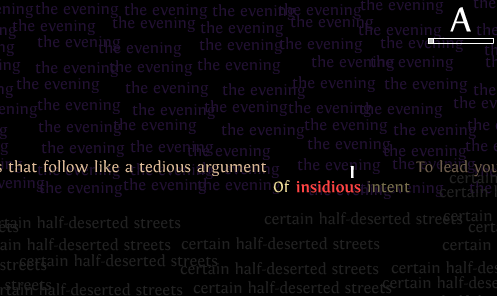

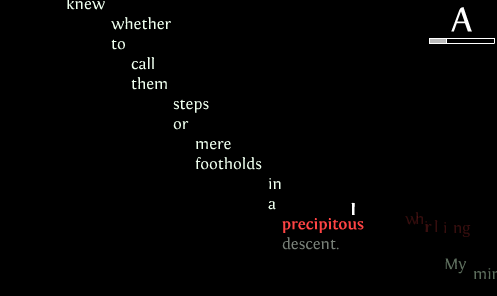

The model of reading is one in which you have to touch every word with your eye (your “I”, in this case) to seek a good score (represented as a letter grade, believe it or not). “Powerful” words fire slow-moving letters at you, which clear away your accomplishments on the screen if they hit you. In some places you can fall to your death and undo the reading on the screen, too.

If this were done as a parody of the reading process as conceived of in the educational system, it could work effectively. Playing the game does suggest to me things that I do when I read – looking back over the text for a word I missed or misread, trying to progress by looking at least at each phrase along the way. But the reading of these texts is numbed, rather than heightened, by having them as elements of play in this way. Just to stick to the mechanics, rather than looking to deeper aesthetic questions about reading: Words are either powerful or not, and I need to simply tag them in either case, carefully if they might undo some of my progress, quickly otherwise. Whether in a long fiction work or a short poem, I can, when reading on paper or in an e-book, skip back to reread without slogging back past each word, as I have to do in this game. And I can reread sections when I’m done, too. While the phrases used as decorations add color and visual interest, this game, perhaps surprisingly, turns a less unilinear reading experience into a more linear experience of reading and playing. It also rewards a perfunctory glance and touch rather than requiring discernment, figuring out, and comprehension, as do some other video games made entirely out of words – works of interactive fiction.

Reading and playing are not two great tastes that taste great together in this case, but I appreciate Weir bumping them into each other in this piece and trying to figure out how they might enhance one another. And for those interested both in gaming and in digital writing/electronic literature, Silent Conversation is certainly required reading.